
Month: September 2022

So far, we have built a few VR experiences in the Future Text Lab:

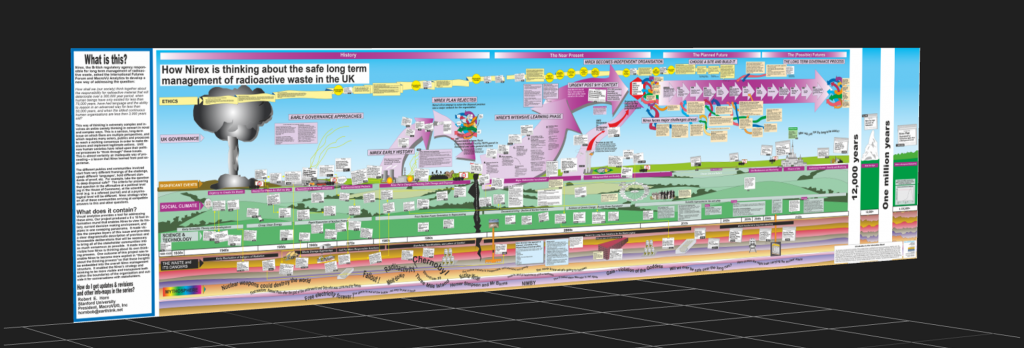

‘Simple’ Mural (By Brandel) A simple and powerful introduction to VR. It shows a single mural by Bob Horn that you can interact with using your hands: pinch to ‘hold’ the mural and move it around as you see fit. You simply pinch in space, and the system counts that as a hold. If someone says VR is just the same as a big monitor, show them this!
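For readers curious how a pinch can count as a ‘hold’, here is a minimal sketch in Python. It is not Brandel's implementation, only one common way to do it: treat the hand as pinching when the thumb tip and index fingertip are closer than a small threshold, and move the mural by however far the pinch point travels while the pinch lasts. The 2 cm threshold and the names `is_pinching`, `Mural` and `PinchHold` are assumptions made up for this illustration.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]

# Assumed value: fingertips closer than 2 cm count as a pinch.
PINCH_THRESHOLD_M = 0.02


def is_pinching(thumb_tip: Vec3, index_tip: Vec3,
                threshold: float = PINCH_THRESHOLD_M) -> bool:
    """Return True when thumb and index fingertips are nearly touching."""
    return math.dist(thumb_tip, index_tip) < threshold


@dataclass
class Mural:
    # Hypothetical stand-in for the mural object: just a position in metres.
    position: Vec3 = (0.0, 0.0, -1.0)


class PinchHold:
    """Moves the mural by the hand's displacement while a pinch is held."""

    def __init__(self, mural: Mural) -> None:
        self.mural = mural
        self._last_pinch_point: Optional[Vec3] = None

    def update(self, thumb_tip: Vec3, index_tip: Vec3) -> None:
        """Call once per frame with the current fingertip positions."""
        if not is_pinching(thumb_tip, index_tip):
            # Hand opened: let go of the mural.
            self._last_pinch_point = None
            return

        # Use the midpoint between the two fingertips as the pinch point.
        pinch_point = tuple((a + b) / 2 for a, b in zip(thumb_tip, index_tip))

        if self._last_pinch_point is not None:
            # Apply the pinch point's movement since the last frame.
            delta = tuple(c - p for c, p in zip(pinch_point, self._last_pinch_point))
            self.mural.position = tuple(
                m + d for m, d in zip(self.mural.position, delta)
            )
        self._last_pinch_point = pinch_point
```

In a real headset the fingertip positions would come from the hand-tracking system on every frame; here they are simply passed in as `(x, y, z)` tuples.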

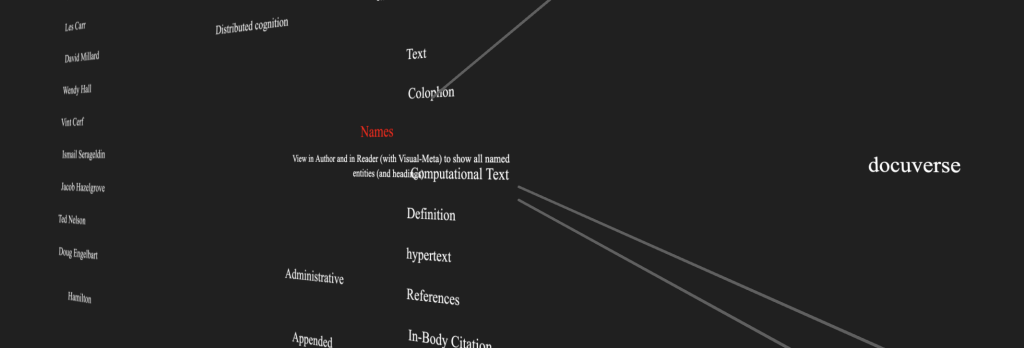

Basic Author Map of the Future of Text (By Brandel) Open this URL in your headset and in a browser, then drag in an Author document to see the Map of the Defined Concepts.

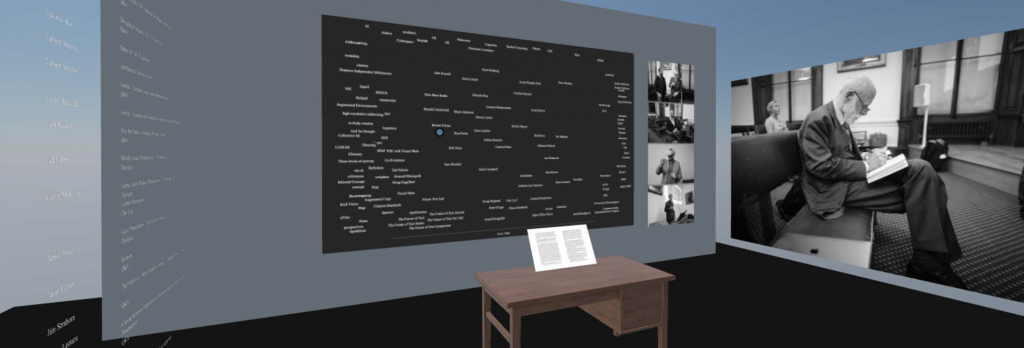

Basic Reading & Graph in VR (By Frode) An experiment in sitting at a desk and interacting with documents in a VR setting.

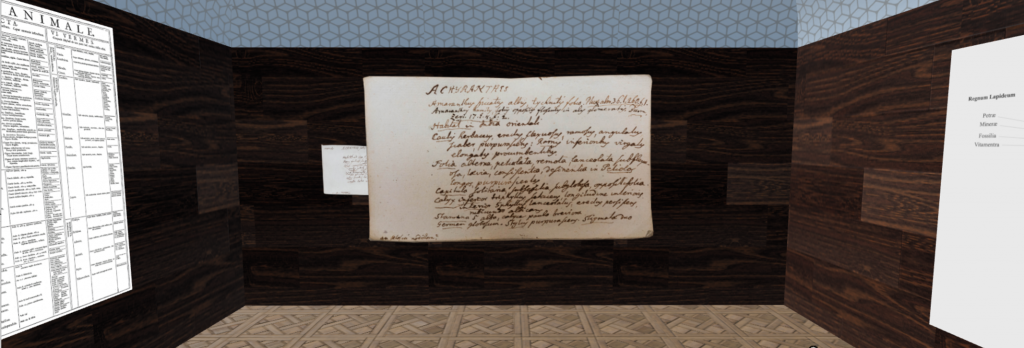

Simple Linnean Library (By Frode) A rough-and-ready room made by a novice; this is something you can do too. I used Mozilla Spoke to build an experience that can be viewed in Mozilla Hubs in any browser, in 2D or in VR.

Self Editing Tool (By Fabien) In this environment you can directly manipulate text, and even execute short snippets of text as code by pinching them.

Walkthrough video: video.benetou.fr/w/ok9a1v33u2vbvczHPp4DaE